With the exception of ChatGPT 4o, almost all publicly available large language models showed signs of mild cognitive impairment on the Montreal Cognitive Assessment (MoCA) test. The findings challenge the assumption that artificial intelligence will soon replace human doctors, as the cognitive deficits seen in leading chatbots may affect their reliability in medical diagnostics and undermine patient trust.

Dayan et al. found that large language models perform well across many cognitive domains but show marked deficits in visuospatial and executive function, resembling mild cognitive impairment in humans.

Recent years have seen major advances in artificial intelligence, particularly in the generative capabilities of large language models.

The leading models, such as OpenAI’s ChatGPT, Alphabet’s Gemini, and Anthropic’s Claude, have shown that they can complete both general and specialized tasks through simple text interactions.

In medicine, these developments have prompted a flurry of speculation, both excited and fearful: can artificial intelligence chatbots outperform human physicians? If so, which practices and specialties are most at risk?

Since ChatGPT was publicly released in 2022, medical journals have published numerous studies comparing the performance of human clinicians with that of these systems, which have been trained on a vast corpus of human knowledge.

Although large language models sometimes err (for example, by citing journal articles that do not exist), they have proven remarkably adept at a range of medical examinations, often outscoring human physicians on qualifying examinations taken at different stages of traditional medical training.

They have outperformed cardiologists on European cardiology examinations, residents on Israeli internal medicine board examinations, Turkish surgeons on theoretical thoracic surgery examinations, and German gynecologists on German obstetrics and gynecology examinations.

The authors, themselves neurologists, note with dismay that the models have even outscored neurologists on the neurology board examination.

“To our current knowledge, however, large language models have not yet been evaluated for indicators of cognitive decline,” said Roy Dayan, a doctoral candidate at Hadassah Medical Center, and his colleagues.

“Should we be inclined to entrust them with medical diagnosis and patient care, it is imperative that we ascertain their vulnerability to these distinctly human impairments.”

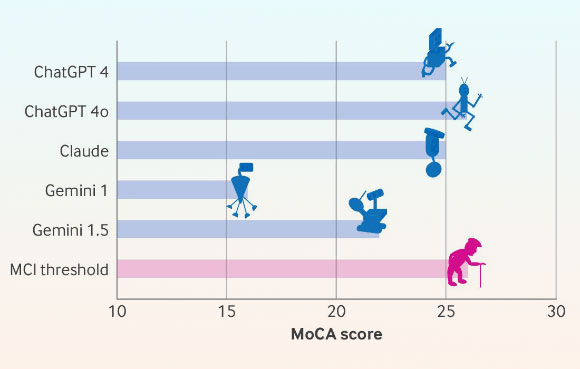

Montreal Cognitive Assessment (MoCA) scores (out of 30) for the tested large language models; MCI: mild cognitive impairment. Image credit: Dayan et al., doi: 10.1136/bmj-2024-081948.

Using the MoCA test, the researchers assessed the cognitive abilities of the leading publicly available large language models: ChatGPT versions 4 and 4o, Claude 3.5 Sonnet, and Gemini versions 1 and 1.5.

The test is widely used to detect cognitive impairment and early signs of dementia, usually in older adults.

Through a series of short tasks and questions, it assesses abilities including attention, memory, language, visuospatial skills, and executive function.

The maximum score is 30 points, and a score of 26 or above is generally considered normal.

The instructions given to the large language models for each task were the same as those given to human patients.

Scoring followed the official guidelines and was evaluated by a practicing neurologist.

ChatGPT 4o achieved the highest score on the MoCA test (26 out of 30), followed by ChatGPT 4 and Claude (25 out of 30 each), while Gemini 1.0 scored lowest (16 out of 30).
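
To make the threshold concrete, the short sketch below applies the cutoff of 26 described above to the four scores reported in this article. It is only an illustration, not the authors' scoring procedure: the names `reported_scores` and `classify` are hypothetical, and Gemini 1.5 is left out because its score is not stated here.

```python
# Illustrative only: cutoff and scores are taken from the article text;
# the variable and function names are assumptions made for this sketch.
MOCA_MAX = 30
NORMAL_CUTOFF = 26  # a score of 26 or above is generally considered normal

reported_scores = {
    "ChatGPT 4o": 26,
    "ChatGPT 4": 25,
    "Claude 3.5 Sonnet": 25,
    "Gemini 1.0": 16,
}


def classify(score: int, cutoff: int = NORMAL_CUTOFF) -> str:
    """Label a MoCA total as at/above or below the cutoff."""
    if not 0 <= score <= MOCA_MAX:
        raise ValueError(f"MoCA score must be between 0 and {MOCA_MAX}")
    return "at or above cutoff" if score >= cutoff else "below cutoff"


for model, score in reported_scores.items():
    print(f"{model}: {score}/{MOCA_MAX} ({classify(score)})")
```

Of the scores listed, only ChatGPT 4o reaches the cutoff, which matches the article's summary.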

All chatbots performed poorly on visuospatial and executive tasks, such as the trail making test (connecting numbered and lettered circles in sequence) and the clock drawing test (drawing a clock face showing a specific time).

The Gemini models failed the delayed recall task, which requires recalling a sequence of five previously presented words.

Most other tasks, including naming, attention, language, and abstraction, were performed well by all chatbots.

However, in further visuospatial tests, the chatbots were unable to show empathy or accurately interpret complex visual scenes.

Only ChatGPT 4o succeeded in the incongruent stage of the Stroop test, which uses combinations of color names and font colors to measure how interference affects reaction time.

These are observational findings, and the authors acknowledge the essential differences between the human brain and large language models.

However, they point out that the uniform failure of all the tested large language models on tasks requiring visual abstraction and executive function highlights a significant weakness that could impede their use in clinical settings.

“Not only is it improbable that neurologists will be supplanted by large language models in the foreseeable future, but our findings suggest that they may soon find themselves engaged in the treatment of novel, virtual patients: specifically, artificial intelligence models presenting with cognitive impairments,” the researchers said.

Their paper was published today in The BMJ.

_____

Roy Dayan et al. 2024. Age against the machine – susceptibility of large language models to cognitive impairment: cross sectional analysis. BMJ 387: e081948; doi: 10.1136/bmj-2024-081948